How Banana Pro AI Fits Quality Control

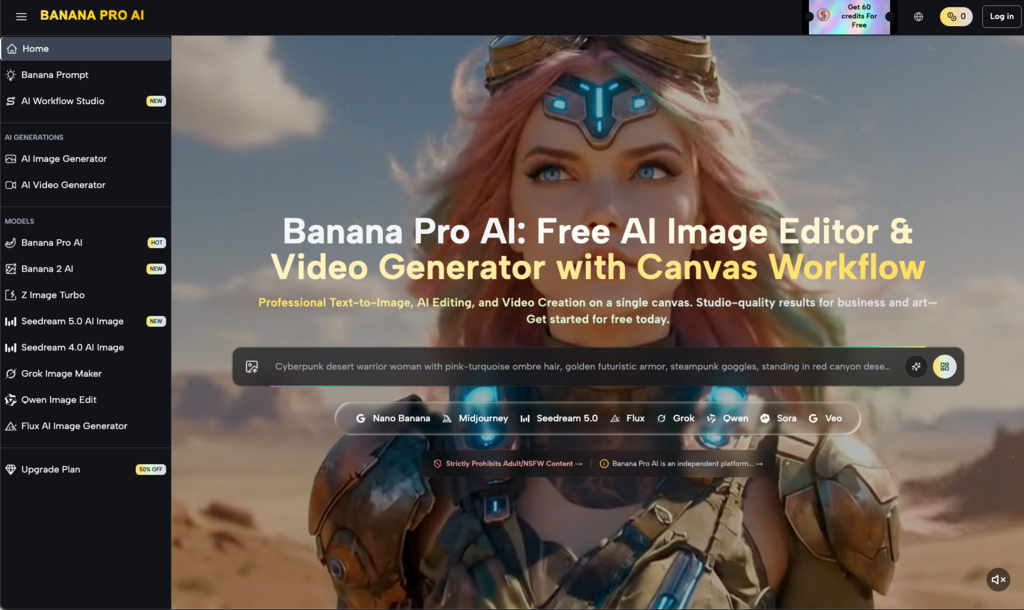

AI-assisted creative production has moved rapidly from a “novelty” phase to a standard operational requirement. For marketing teams and independent creators, the challenge is no longer just generating a high-fidelity image; it is generating that image in a way that remains consistent across an entire campaign. When a workflow involves multiple stakeholders, the “randomness” typically associated with generative models becomes a liability rather than a feature. This is where integrating Nano Banana Pro into a production pipeline changes the dynamic from simple prompting to structured asset management.

Quality control in an AI-driven environment is less about the initial output and more about the “editability” of that output. If a creator cannot tweak a specific shadow, adjust a texture, or maintain a character’s likeness across several frames, the tool remains a toy. By utilizing a dedicated AI Image Editor alongside high-speed generation models, teams are finding ways to bridge the gap between a raw prompt and a finalized, brand-compliant creative asset.

The Efficiency of Nano Banana Pro in High-Volume Iteration

In a traditional agency setting, the “mood board” phase can take days. With the advent of tools like Nano Banana, this process has shrunk to minutes. However, the speed of Nano Banana can be a double-edged sword. Generating fifty variations of a concept is useless if none of them align with the technical requirements of the final deliverable. The professional implementation of Nano Banana Pro focuses on narrowing the variance of these outputs.

Operators use these tools not just for the final “hero” image, but to build a library of components. For instance, a performance marketer might need a specific background for a product shot. Instead of hoping the model gets the product right, they generate the environment first. This modular approach allows for much tighter quality control because the AI is not being asked to solve five problems at once (lighting, product accuracy, composition, brand colors, and depth of field). By isolating the generation task, the Banana Pro ecosystem allows for a cleaner handoff to the design team.

Even with advanced systems, however, these models have a persistent limitation in handling precise spatial relationships. If a campaign requires a particular interaction, such as a hand holding a non-standard mechanical tool, the AI Image Editor becomes the essential secondary step. Relying purely on the generation phase for complex physical interactions often leads to “uncanny valley” results that fail a rigorous quality audit.

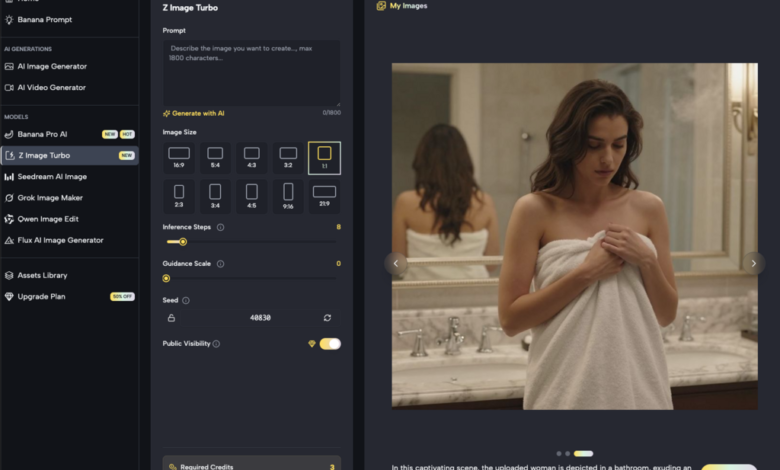

Integrating the AI Image Editor into the Canvas Workflow

The most significant bottleneck in AI creative work has historically been the “all or nothing” nature of the output. If 90% of an image was perfect but the reflection on a window was skewed, the creator often had to start over. The “Canvas” approach utilized by Banana AI shifts this paradigm. By bringing the generated assets into a workflow studio environment, the editor allows for localized changes.

This “in-painting” and “out-painting” capability is the backbone of professional quality control. It allows a team to lock in the parts of an image that work and only iterate on the problematic segments. From a practical standpoint, this reduces the “compute waste” where a creator generates hundreds of images looking for one perfect shot. Instead, they generate one “mostly right” shot and use the Banana Pro tools to refine the details.

The Role of Image-to-Image in Brand Alignment

For marketers, brand alignment usually comes down to color palettes and “vibe” consistency. The “Image-to-Image” feature within the Banana Pro AI suite is frequently used as a control mechanism. By uploading a brand’s existing photography or a basic sketch, creators can guide the Nano Banana model toward a specific composition. This acts as a visual “rail” that prevents the AI from deviating too far into creative directions that don’t serve the client’s brief.

Image-to-Image transformations are powerful, but they are not a perfect “filter.” There is inherent uncertainty in how the model weighs a reference image against the text prompt. Occasionally, the AI might prioritize the textures of the reference while ignoring the structural requirements of the prompt, or vice versa. A human operator then has to adjust the “denoising strength” or influence sliders, a skill that separates professional AI operators from casual users.
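To make the strength slider less abstract, here is a minimal sketch of how many open-source diffusion image-to-image pipelines interpret it: the strength value decides what fraction of the denoising schedule is run on top of the reference image. Whether the Banana Pro tools work the same way internally is an assumption, and the function name and step count are hypothetical.

```python
def effective_steps(total_steps: int, strength: float) -> int:
    """Denoising steps applied to the reference image.

    Assumes the convention used by common open-source img2img pipelines:
    steps = floor(total_steps * strength). This is NOT confirmed for Banana Pro.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return min(int(total_steps * strength), total_steps)


# Low strength stays close to the reference; high strength follows the prompt more.
for strength in (0.2, 0.5, 0.8):
    print(f"strength={strength}: {effective_steps(40, strength)} of 40 steps")
```

Under this assumption, a low value preserves most of the reference structure, while a value near 1 leaves the text prompt almost fully in control. That is why small slider changes can flip which input the model appears to favor.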

Maintaining Video Consistency with Banana Pro

As brands move from static images to motion, the quality control hurdles multiply. A slight flicker or a shifting background can ruin a 5-second social ad. The current workflow involves generating a high-quality “anchor” image using Nano Banana Pro and then using it as the starting reference for the AI video generator. This helps keep the aesthetic grounded in the approved static asset.

Quality control in video is currently in a state of rapid evolution, and it remains one of the more challenging areas for creators. We must be realistic: creating a 30-second seamless narrative with perfect temporal consistency is still a high-friction task. Most successful teams are using Banana AI to create short, 2-to-4-second atmospheric clips that are then edited together in traditional software. Trying to force the AI to generate a complex, long-form sequence in one go usually results in a loss of quality that most professional brands will not accept.
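The short-clip approach can be planned with simple arithmetic. The sketch below splits a target runtime into equal clips that stay under a maximum length; the 4-second cap comes from the range mentioned above, while the function name and the equal-split strategy are illustrative assumptions.

```python
import math


def plan_clips(total_seconds: float, max_clip_seconds: float = 4.0) -> list[float]:
    """Split a runtime into equal-length clips no longer than max_clip_seconds."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    # Smallest number of clips that keeps every clip under the cap.
    count = math.ceil(total_seconds / max_clip_seconds)
    return [total_seconds / count] * count


clips = plan_clips(30)
print(len(clips), "clips of", clips[0], "seconds each")
```

For a 30-second narrative this yields eight clips of 3.75 seconds, each generated separately from the approved anchor image and then assembled in traditional editing software.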

The Tactical Advantage of a Unified Workflow Studio

One of the biggest drains on creative productivity is “tool hopping.” Moving an image from a generator to a separate editing suite, then to a different tool for upscaling, and finally to a video tool creates a fragmented workflow. This fragmentation is where version control issues usually start. One person might be working on an un-upscaled version, while another is editing a version with a different aspect ratio.

The “Workflow Studio” model aims to solve this by keeping the asset within a single environment. When the generation, editing, and video components are all under the Banana Pro umbrella, the metadata and original “seed” values are easier to track. This visibility is vital for quality control. If a team lead needs to know why a certain character looks different in a video clip compared to the initial ad, they can look back at the prompt history and the specific model used (whether it was Seedream 5.0 or a specialized version of Nano Banana).
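One way to picture this kind of tracking is a small provenance record per asset. The sketch below is hypothetical: the field names and the `diff_records` helper are illustrative and do not describe how Banana Pro actually stores its metadata.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class AssetRecord:
    """Hypothetical provenance record for one generated asset."""
    asset_id: str
    prompt: str
    model: str
    seed: int
    parent_id: Optional[str] = None  # asset this one was derived from


def diff_records(a: AssetRecord, b: AssetRecord) -> dict:
    """Return the generation settings that differ between two assets."""
    left, right = asdict(a), asdict(b)
    ignored = {"asset_id", "parent_id"}
    return {
        key: (left[key], right[key])
        for key in left
        if key not in ignored and left[key] != right[key]
    }


ad = AssetRecord("ad-01", "barista, warm light", "Nano Banana Pro", 1234)
clip = AssetRecord("clip-01", "barista, warm light", "Seedream 5.0", 1234, "ad-01")
print(diff_records(ad, clip))
```

Here the diff would show that only the model changed between the initial ad and the video clip, which gives the team lead a concrete starting point for the investigation.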


Handling High-Resolution Requirements

A common pitfall for new AI creators is generating assets that look great on a small preview screen but fall apart when scaled for a website hero section or a physical print. Quality control must include a rigorous upscaling and sharpening phase. The built-in tools in the Banana Pro ecosystem allow for this scaling without the “hallucination” of extra details that often occurs in third-party upscalers.

The goal is to enhance the existing pixels rather than invent new ones. This is a subtle but important distinction for brand safety. If an AI upscaler starts adding texture to a smooth product surface, it has failed the quality control test. Professionals often prefer a slightly softer, more accurate image over a hyper-sharp one that has been “over-imagined” by a poorly tuned model.
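Before upscaling, it helps to know how much scaling a deliverable actually needs. The sketch below computes the factor from the print size and resolution (pixels = inches × DPI). The 300 DPI default is a common print guideline used here as an assumption, and the function name is hypothetical.

```python
import math


def required_scale(source_px: int, target_inches: float, dpi: int = 300) -> float:
    """Upscale factor needed along one dimension for a print deliverable."""
    if source_px <= 0 or target_inches <= 0 or dpi <= 0:
        raise ValueError("all inputs must be positive")
    needed_px = math.ceil(target_inches * dpi)
    return needed_px / source_px


# A 1024 px wide render printed 10 inches wide needs roughly a 3x upscale.
print(round(required_scale(1024, 10), 2))
```

A factor at or below 1.0 means the asset already has enough pixels, so the upscaling step can be skipped for that deliverable.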

Practical Limitations and the “Human-in-the-Loop” Necessity

Despite the advancements in Nano Banana and the broader Banana AI suite, it is essential to reset expectations regarding “automated” quality. AI is not a set-it-and-forget-it solution for creative departments. It is an “accelerator.”

One significant limitation involves text rendering within images. While models are improving, they still struggle with specific typography or long strings of text. A brand manager expecting the AI to perfectly render a slogan on a billboard will likely be disappointed. The current professional standard is to generate the billboard as an environment and then use the editor or traditional design software to overlay the typography.

Furthermore, there is the issue of “prompt drift.” In a large campaign where multiple people are generating assets, the interpretation of a prompt like “minimalist kitchen” can vary wildly depending on the specific model version or the weight of certain keywords. Quality control in this context requires a “prompt engineer” or a creative director who sets the “Master Prompt” and specific parameters that everyone must follow to ensure the Banana Pro outputs look like they belong to the same family.
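A “Master Prompt” can be treated as a locked configuration that every operator builds on. The sketch below is one hypothetical way to represent it; the class, its fields, and the example values are assumptions for illustration, not a Banana Pro feature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: operators cannot change shared settings by accident
class MasterPrompt:
    """Hypothetical campaign-wide prompt settings set by the creative director."""
    style: str
    palette: str
    model: str

    def build(self, subject: str) -> str:
        """Combine a per-asset subject with the locked campaign wording."""
        parts = (subject.strip(), self.style, self.palette)
        return ", ".join(part for part in parts if part)


campaign = MasterPrompt(
    style="minimalist kitchen, soft daylight",
    palette="white and oak tones",
    model="Nano Banana Pro",
)
print(campaign.build("espresso machine on counter"))
```

Each team member supplies only the subject, so the style and palette wording stays identical across the campaign and the model choice is recorded in one place.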

Conclusion: Building a Repeatable Pipeline

Quality control is ultimately about repeatability. Any tool can generate a “lucky” image once. A professional tool is one that can generate a specific result, on demand, a hundred times over. By combining the rapid generation of Nano Banana Pro with the precision of a dedicated editor, teams are finally able to treat generative AI as a reliable part of their tech stack.

The workflow of the future is not a single prompt; it is a layered process. It starts with a vision, moves through a high-speed iteration phase with Nano Banana, gets refined in the canvas, and is finally scaled for production. Throughout this journey, the human operator remains the final arbiter of quality, using the Banana Pro AI tools to remove the drudgery of manual creation while maintaining the strict standards of modern marketing. As these tools continue to evolve, the teams that focus on “editability” and “consistency” rather than just “output” will be the ones that successfully integrate AI into their long-term creative strategies.